Traditional Mathematics

Algorithms based on traditional mathematics follow an explicit logical flow to reach a useful result. The founder of Codotek Innovation Inc. has a PhD in Physics. During his doctorate, he used a decomposition into vector spherical harmonics to study the behavior of light on birefringent and nonlinear nanoparticles. That mathematics was specific to the problem. The key is to always choose the mathematical tool best suited to the problem. Experience and a sound working method help avoid Maslow's hammer: "When the only tool you have is a hammer, it's tempting to treat everything like a nail."

Example of signal analysis

Take signal analysis as an example. Any sensor reports raw information about a situation or event. The environment under study may or may not be stimulated. A lock-in amplifier can be used to filter out noise from a weak signal acquired under stimulation. We can then work on the low-frequency part of the signal if it is of value.
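To make the lock-in idea concrete, here is a minimal digital sketch. It assumes the stimulation frequency is known and that the record spans a whole number of periods. The function name `lock_in`, the sampling rate, and the signal parameters are illustrative choices, not values from a real acquisition system.

```python
import numpy as np

def lock_in(signal, t, f_ref):
    """Estimate amplitude and phase of the component of `signal` at f_ref.

    The signal is multiplied by in-phase and quadrature references, then
    averaged. The average acts as a crude low-pass filter (assumption: the
    record covers an integer number of reference periods).
    """
    in_phase = signal * np.cos(2 * np.pi * f_ref * t)
    quadrature = signal * np.sin(2 * np.pi * f_ref * t)
    x = 2 * np.mean(in_phase)
    y = -2 * np.mean(quadrature)  # sign chosen so that phase matches cos(wt + phase)
    return np.hypot(x, y), np.arctan2(y, x)

# Hypothetical acquisition: a weak 137 Hz response buried in much stronger noise.
rng = np.random.default_rng(0)
fs = 10_000.0                       # sampling rate in Hz (assumed)
t = np.arange(100_000) / fs         # 10 s record, 1370 full periods
f_ref = 137.0
weak = 0.05 * np.cos(2 * np.pi * f_ref * t + 0.3)
noisy = weak + rng.normal(0.0, 0.5, t.size)

amplitude, phase = lock_in(noisy, t, f_ref)
print(f"amplitude ~ {amplitude:.3f}, phase ~ {phase:.2f} rad")
```

A hardware lock-in amplifier uses a proper low-pass filter rather than a plain average, but the principle is the same: only the part of the signal synchronized with the reference survives.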

We can perform a Fourier transform on a signal to break it down into its frequency components. We can also (or then) use a PCA (Principal Component Analysis) model to group together the characteristics shared by certain sets of signals. Similarly, we can use PLS (Partial Least Squares) regression to calculate a useful end result from the main components of a signal.
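As a rough illustration of chaining these tools, the sketch below computes magnitude spectra with a Fourier transform and then projects them onto their first principal component. The two signal groups (50 Hz and 120 Hz) are synthetic and purely hypothetical. PCA is done here with a plain SVD to keep the example dependency-free, and PLS is left out.

```python
import numpy as np

rng = np.random.default_rng(1)
fs = 1000.0                      # sampling rate in Hz (assumed)
t = np.arange(1000) / fs         # 1 s record, so 1 Hz frequency resolution

def make_signal(freq):
    """Synthetic noisy sine wave standing in for a sensor recording."""
    return np.sin(2 * np.pi * freq * t) + 0.2 * rng.normal(size=t.size)

# Two hypothetical groups of signals: 20 at 50 Hz, then 20 at 120 Hz.
signals = np.array([make_signal(50.0) for _ in range(20)]
                   + [make_signal(120.0) for _ in range(20)])

# Fourier transform: magnitude spectrum of every signal.
spectra = np.abs(np.fft.rfft(signals, axis=1))
freqs = np.fft.rfftfreq(t.size, d=1 / fs)
dominant = freqs[np.argmax(spectra, axis=1)]

# PCA via SVD of the mean-centred spectra; rows of vt are the components.
centred = spectra - spectra.mean(axis=0)
_, _, vt = np.linalg.svd(centred, full_matrices=False)
scores = centred @ vt[0]         # projection onto the first component

print(dominant[:3], dominant[-3:])
print(scores[:3], scores[-3:])
```

In this toy case the first component alone separates the two groups. Real signals usually need several components and some care with scaling.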

In short, the field of signal processing alone offers a wide range of mathematical tools, each suited to certain situations. Not every tool can be applied in every situation. At Codotek Innovation, we do systems engineering before starting to write code. So, before we code an algorithm, we make sure we have a good plan and know what we want to do.

What working method should I use?

First of all, systems engineering is used to ensure that we understand the problem and its scope. In the simplest cases, this may be sufficient. However, most algorithms are too complex for that. Systems engineering helps to fully understand the problem, but it is often difficult to go deeper with this technique alone without taking (wasting?) a lot of time.

The ideal is to split the algorithm into parts and build a proof of concept (POC) for each of them. You do this by writing code that you can quickly throw away. Be careful: there are two catches. First, the code needs to be written quickly, so we do not spend much time on its structure. Second, you have to rewrite this code cleanly when the time comes. A good technique is to use different software or start a separate project for each POC.

Artificial intelligence

Algorithms using AI, and especially deep neural networks, have been all the rage in recent years, yet they have been around since the 1950s. The mathematics of AI has evolved since then, but it is mostly the increase in computing power that has driven their progress.

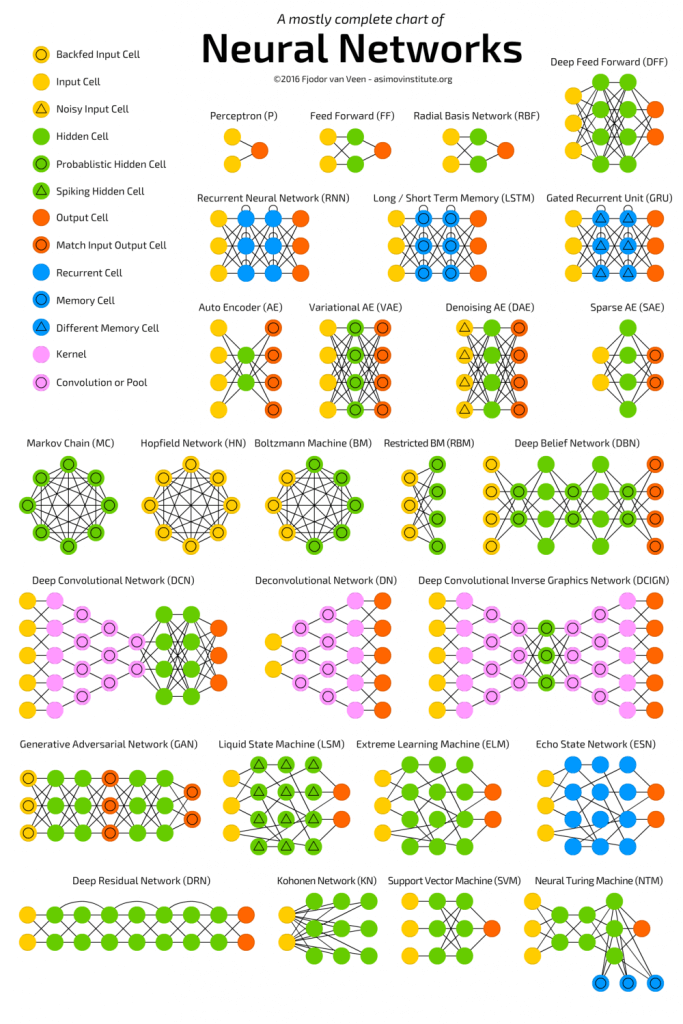

When we work on traditional algorithms, we use suitable mathematical tools. For AI, the tools take the form of different neural structures tailored to the problem at hand. The accompanying figure shows several basic neural network architectures. It is already recognized that CNN-type networks are suitable for signal analysis and that RNN-type networks are suitable for voice recognition.

The work is far from limited to choosing a backbone network. With traditional algorithms, developers know their tools and often know in advance what effect a mathematical tool will have on the data. With neural networks, we can develop the same instinct on a given project. However, this is often harder because neural networks have so many variables, which makes it difficult to understand how the network really works. By extension, it is even harder to predict the effect on a different project. As the work progresses and the network is refined, the discussion shifts toward a meta-analysis of neural network architectures.

Importance of data

All the power of AI is in the data. If you want to work with a neural network, you have to be able to collect large amounts of data on the system under study. The total amount of data needed usually depends on the complexity of the problem and the solution used. It is difficult to determine a priori. In practice, we can use other completed projects as a baseline. It is also possible to find similar projects on the web. In all cases, we must evaluate the number of classes, the number of parameters, the number of input features and the number of variants of the problem.

The number of variations of the problem is worth examining because each variation can serve as a POC for a sub-problem. When the overall problem seems too complex or requires too much data, it also makes it possible to develop a separate model for each variant. For example, instead of developing one AI that can detect cancer in general, we can develop one AI for lung cancer, another for blood cancer, and so on.

In all cases, rigorous data acquisition must allow full traceability. The correct end result must accompany the raw data. In addition, we must make sure to save the context in which the data was captured. At first glance the context may seem superfluous, but as the project is refined, it usually becomes very important. Codotek Innovation can develop your custom data acquisition system to meet AI needs.
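One way to picture such a traceable record is sketched below. The `Sample` class, its field names, and the example values are all hypothetical. The point is only that the raw data, the expected end result, and the capture context travel together and survive a round trip to storage.

```python
from dataclasses import dataclass, field, asdict
from datetime import datetime, timezone
from typing import Dict, List
import json

@dataclass
class Sample:
    """One acquisition: raw data, its expected result, and its context."""
    sample_id: str
    raw: List[float]                       # raw sensor values
    label: str                             # the correct end result
    context: Dict[str, str] = field(default_factory=dict)
    captured_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

def to_json_line(sample: Sample) -> str:
    """Serialize a sample as one JSON line, e.g. for an append-only log."""
    return json.dumps(asdict(sample), sort_keys=True)

def from_json_line(line: str) -> Sample:
    """Rebuild a sample from a line written by to_json_line."""
    return Sample(**json.loads(line))

# Hypothetical acquisition with its context saved alongside the data.
sample = Sample(
    sample_id="run-0001",
    raw=[0.12, 0.15, 0.11],
    label="ok",
    context={"sensor": "hypothetical-probe-A", "temperature_c": "21.5"},
)
line = to_json_line(sample)
restored = from_json_line(line)
print(restored == sample)
```

Keeping the context as free-form key/value pairs is a deliberate choice in this sketch: the fields that turn out to matter are often only known later in the project.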

Not enough data?

Capturing a wide range of data that covers all outcomes, contexts and variations is often very expensive. For this reason, there are different methods of data augmentation. It is also possible to generate artificial data from traditional mathematics or even from other existing neural networks.
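As a minimal sketch of data augmentation on a one-dimensional signal, the code below creates variants of a recording by applying a small time shift, a gain change, and added noise. The function `augment` and its default parameters are assumptions for illustration. In a real project, each transformation must be one that leaves the expected end result unchanged.

```python
import numpy as np

def augment(signal, rng, noise_std=0.05, max_shift=3, gain_range=(0.9, 1.1)):
    """Return a randomly perturbed copy of `signal`.

    Applies a circular time shift, a gain change, and additive Gaussian
    noise. All ranges are illustrative defaults, not recommended values.
    """
    shift = rng.integers(-max_shift, max_shift + 1)
    gain = rng.uniform(*gain_range)
    shifted = np.roll(signal, shift)
    return gain * shifted + rng.normal(0.0, noise_std, signal.shape)

rng = np.random.default_rng(2)
t = np.linspace(0.0, 1.0, 200, endpoint=False)
original = np.sin(2 * np.pi * 5 * t)      # hypothetical recorded signal

# Eight augmented variants that would all share the original's label.
augmented = np.stack([augment(original, rng) for _ in range(8)])
print(augmented.shape)
```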

Have a project? Contact us and we'll see how we can help you.